Yesterday I watched the video recommended by Furious Hans about the interview with Adam Green. Green is the author of The Jesus Deception: A Mystical Midrashic Myth, recently released, which aligns with what we’ve been saying on this site about the non-historicity of Jesus.

Like David Skrbina (Kevin MacDonald published an article reviewing Skrbina’s book some time ago), Green believes that the New Testament was a kind of Jewish psyop against the Gentiles. It caught my attention, and I immediately got access to The Jesus Deception via Amazon, in its Kindle version. But we must make it clear that Green is not a National Socialist.

As is evident from the interview suggested by Furious Hans, both the interviewers and Green himself are still neochristians in the sense of having qualms about expelling all non-whites from North America (and from Europe, Australia, and New Zealand where, incidentally, Peter Jackson filmed The Lord of the Rings trilogy). Although one of Green’s interviewers said he was reading Nietzsche, he apparently hasn’t read neo-Nietzscheans like William Pierce, particularly regarding The Turner Diaries (fiction) and Who We Are (nonfiction). Both deal with ethnic cleansing as a moral goal. Recently, a Twitter user liked my praise of Pierce and said he was publishing his works. I asked if Who We Are was among them and he replied that it wasn’t yet. (Does this explain why my priority is founding a publishing house when some priests of the sacred words are already living together in the country where I live?)

Green, as I was saying, still has scruples based on Christian ethics. It reminds me a bit of what I once said to the vlogger from MythVision: that even though he dedicated his YouTube channel to exposing the lack of historicity in biblical narratives, he and his interviewees still adhered to Christian ethics.

I must accept the fact, and it’s incredibly difficult for me to do so, that those who have crossed the psychological Rubicon are very rare because that implies thinking like Himmler (that is: transvaluing values to the times of Titus or Hadrian when whites didn’t feel guilty about the genocide of Jews during Rome’s wars against Judea). I must understand that racialists are stuck in the middle of the river, and the most painful thing (for me) is that they won’t cross it anytime soon!

One of the interesting moments in the interview is when one of the interviewers told Green that perhaps after the impending economic collapse, when Mexican migrants (or migrants from any country) are starving at the doorstep of a white family’s home, values might start to change. Good point! But for people who were tortured by the System to the breaking point, like Benjamin and me, we no longer need that fallen future to transvalue the values of our abusive parents (this is why our publishing house will also be publishing our tragic autobiographies).

Anyway: having said that about Adam Green, I’d like to share my thoughts on what I’ve read of The Jesus Deception. I’ve already read the first chapter, from which I’d just like to share this quote:

Once a people are regarded as the prophets of God [referring to the Jews], they are elevated to a godlike status themselves.

In the interview, one of the interviewers (two brothers, by the way) seemed to have a good instinct for taking baby steps to continue crossing the Rubicon, the one that lay in the middle. The other said that we need a New Story. I already said that a few years ago. We can’t move forward with the Old Story, the one still held by Christian racialists. They are so dishonest that they are incapable of detecting the Orwellian doublethink in their thinking: being wise in the JQ while simultaneously elevating Jewry to divine status, since by worshipping their god they validate their scriptures (including the gospel’s psyop directed at us Gentiles!). And what’s worse: the Christians of the American racial right, traitors in my opinion, dislike “paganism” (a pejorative term for the authentic spirituality of the Aryan man).

What is happening in 2026, in the American-Zionist war against Iran for example, is largely a result of Judeo-Christianity: something that not only white nationalists, but Americans in general cannot, are incapable of, seeing.

The quote I made above from the first chapter of The Jesus Deception is vital to understanding why on this site I focus on the religion of our parents, and on the need for a complete apostasy. It’s not enough to simply “burn all the Bibles” in the US, as Green jokingly said in the interview, but—and this is infinitely more important—to give up Christian ethics, the psyop.

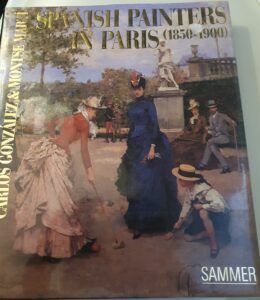

I was browsing around a second-hand charity shop bookstore the other day, and picked this book up (attached picture) for a few pounds. I’ve now examined it throughout twice (so far), and found some of my favourite paintings (I like most of them, and there are many more… one good formal ball scene was spoiled by the presence of a nigger serving boy).
